A Proximal Quasi-Newton Trust-Region Method for Nonsmooth Regularized Optimization

This was a project that I developed with Aleksander Aravkin and Dominique Orban. We attempt to tackle a very generic set of regularized problems f+h, where both may be nonconvex, and h may be nonsmooth. It is currently under review for SIAM Optimization, and ArXiv copy can be found here. I presented at the DOE CSGF Program Review and SIOPT virtually in July 2021.

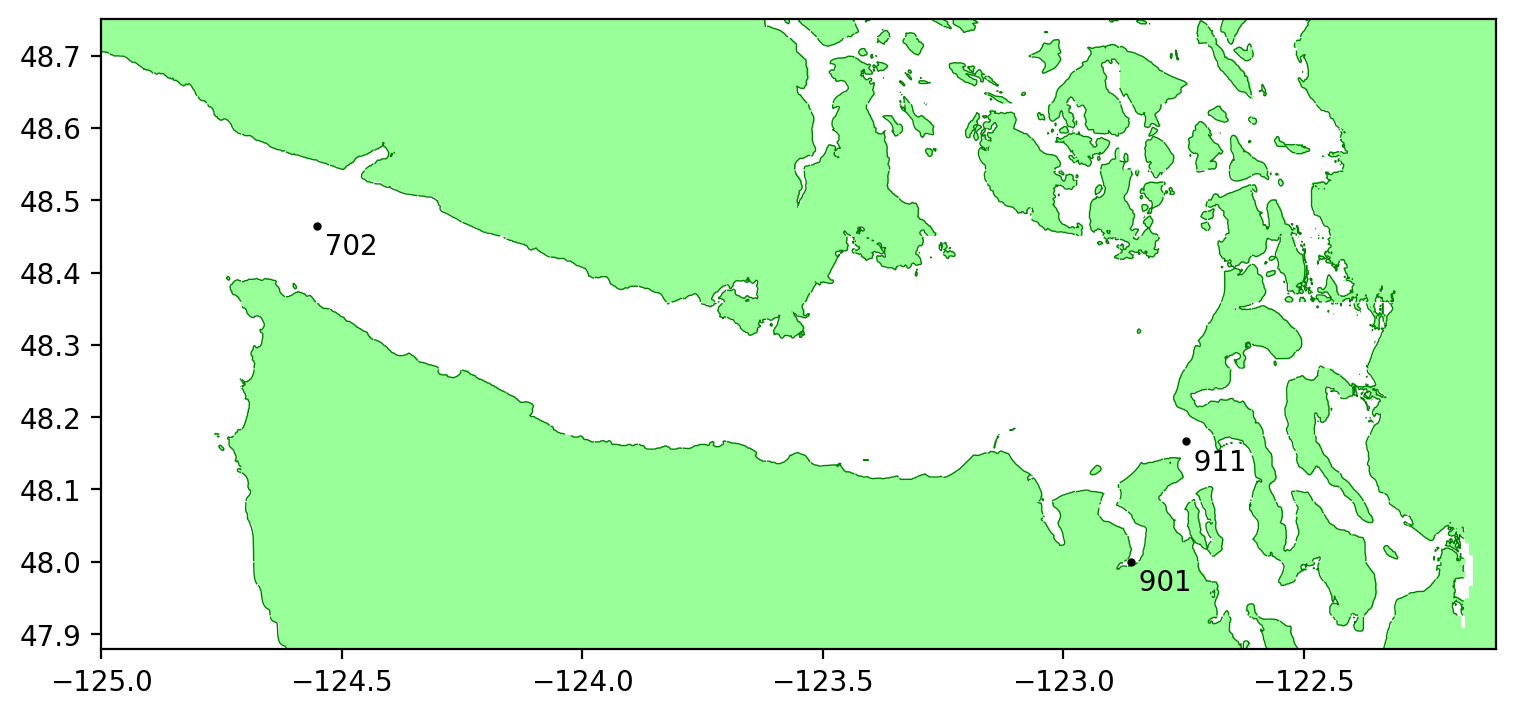

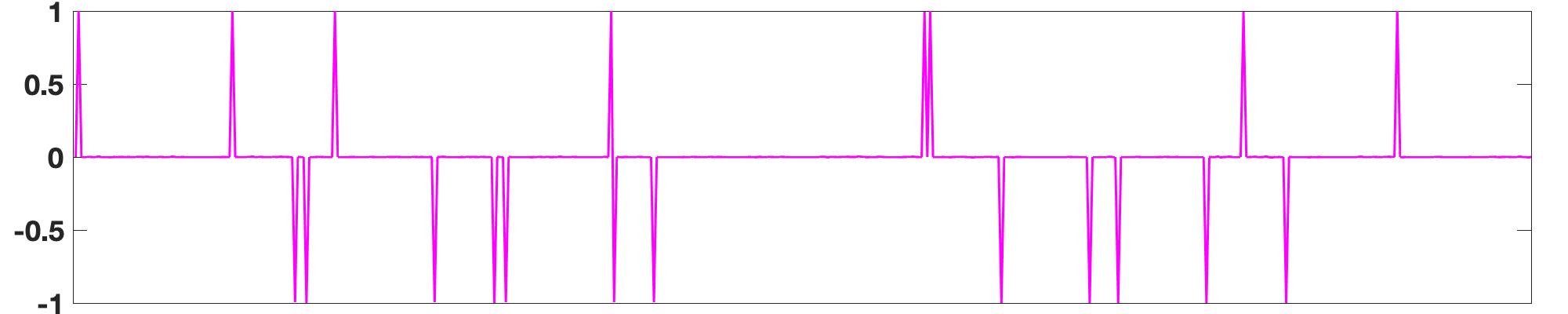

We develop a trust-region method for minimizing the sum of a smooth term f and a nonsmooth term h), both of which can be nonconvex. Each iteration of our method minimizes a possibly nonconvex model of f+h in a trust region. The model coincides with f+h in value and subdifferential at the center. We establish global convergence to a first-order stationary point when f satisfies a smoothness condition that holds, in particular, when it has Lipschitz-continuous gradient, and h is proper and lower semi-continuous. The model of h is required to be proper, lower-semi-continuous and prox-bounded. Under these weak assumptions, we establish a worst-case O(1/ϵ^2) iteration complexity bound that matches the best known complexity bound of standard trust-region methods for smooth optimization. We detail a special instance, named TR-PG, in which we use a limited-memory quasi-Newton model of f and compute a step with the proximal gradient method, resulting in a practical proximal quasi-Newton method. We establish similar convergence properties and complexity bound for a quadratic regularization variant, named R2, and provide an interpretation as a proximal gradient method with adaptive step size for nonconvex problems. R2 may also be used to compute steps inside the trust-region method, resulting in an implementation named TR-R2. We describe our Julia implementations and report numerical results on inverse problems from sparse optimization and signal processing. Both TR-PG and TR-R2 exhibit promising performance and compare favorably with two linesearch proximal quasi-Newton methods based on convex models.