Below, we present several different ways to regularize nonsmooth problems in PDE solutions. This is done with the UFL language Firedrake utilized in Python3. Note that this code comes with it no guarantee of any kind, and primarily serves as a way to combine PDE optimization with proximal-gradient methods. Here are the slides for the talk.

%matplotlib notebook

%load_ext autoreload

%autoreload 2

import numpy as np

from numpy import pi as π

import matplotlib.pyplot as plt

import firedrake

from firedrake import (inner, action, grad, dx, ds, as_vector,

Constant,sqrt,interpolate, min_value,

max_value, derivative, exp, sin, cos,

assemble, replace, norm, adjoint, dot, lt, gt, conditional)

nx, ny = 81, 81

mesh = firedrake.UnitSquareMesh(nx, ny)

V = firedrake.FunctionSpace(mesh, family='CG', degree=1)

Q = firedrake.FunctionSpace(mesh, family='CG', degree=1)

Optimization Problem

Here, we attempt to create a regularization framework for nonsmooth problems in variational analysis - the one we consider here is the obstacle problem. We consider the classic example where we find $u$ that minimizes the Dirichlet energy

\[\begin{align*}

\min_u \mathcal{J}(u) &:= \int_\Omega \left(\frac12 |\nabla u|^2 - fu\right) dx \\

\text{s.t.}\quad u|_{\partial \Omega} &= 0,\quad u\geq g \quad \text{for} \ u\in\Omega.

\end{align*}\]

Generally, our optimization problem contains convex functional $\mathcal{J}$ subject to the constraint that $u$ has to live in a closed convex set $K$ of a function space $Q$.

def Action(u):

J_elastic = 0.5 * inner(grad(u), grad(u)) * dx

return J_elastic

# Enforce boundary condition if you want

# def Action2(u, ρ):

# J_elastic = 0.5 * inner(grad(u), grad(u)) * dx

# J_boundary = ρ*inner(u, u)*ds

# return J_elastic + J_boundary

def Regularization(u, γ):

J_penalty = 0.5 / γ**2 * min_value(u - g, 0)**2 * dx

return J_penalty

#argmin_y of (1/(2γ))||u - y|| + δ(y>=v)(y) is just v when y<u or u when y>u

def proxReg(u,u2):

return firedrake.interpolate(max_value(u, u2),Q)

Obstacle Problem

In the test problem, the domain will be the unit square and obstacle function $g$ will be the upper half of a sphere.

def make_obstacle(mesh):

x = firedrake.SpatialCoordinate(mesh)

y = as_vector((1/2, 1/2))

z = 1/4

return sqrt(max_value(z**2 - inner(x - y, x - y), 0))

g = firedrake.interpolate(make_obstacle(mesh), V)

firedrake.plot(g,cmap='magma', plot3d=True)

<IPython.core.display.Javascript object>

<matplotlib.axes._subplots.Axes3DSubplot at 0x7f79ef5fb160>

While Newton’s method works well for unconstrained convex problems like PDES, it is more difficult to implement with inequality constraints. To paraphrase a previous discussion of this topic, expanding the literature to an infinite-dimensional setting is not obvious and active set methods are less than ideal in this case.

Minimizing the action functional $\mathcal{J}$ over the convex set $K$ can be rephrased as an unconstrained problem to minimize the functional

\(\mathcal{J}(u) + \mathcal{I}(u)\)

where $\mathcal{I}$ is the indicator function of the set $K$:

\(\mathcal{I}(u) = \begin{cases} 0\quad u\in K\\ \infty\quad u\not\in K\end{cases}\)

which is still convex but can take $\infty$ as a value.

Moreau-Yoshida Regularization

Smoothing the Indicator Function

We can approximate the Indicator Function with Moreau-Yoshida regularization - the envelope $\mathcal{I}$ is defined as

\[\mathcal{I}_\gamma(u) = \min_v \left(\mathcal{I}(v) + \frac{1}{2\gamma^2}\|u - v\|^2 \right)\]

Note that $\lim_{\gamma\rightarrow 0} \mathcal{I}_\gamma = \mathcal{I}$. The Moreau envelope is much easier to work with than the original functional because it is differentiable and can occasionally be computed analytically. For example, the envelope of the indicator function $\mathcal{I}$ is analytically represented with

\(\mathcal{I}_\gamma=\frac{1}{2\gamma^2}\mbox{dist}(u, K)^2\)

where $\mbox{dist}$ is the distance to a convex set. For our particular case, $K$ is the set of all functions greater than $g$, for this choice of $K$, the distance to $K$ is

\[\mbox{dist}(u, K)^2 = \int_\Omega (u-g)^2_- dx,\]

where $v_-=min(v, 0)$ is the negative part of $v$. Hence, the approach to the obstacle problem can be solving the minimizer of

\(\mathcal{J}_\gamma = \int_\Omega \left(\frac12 |\nabla u|^2 - fu\right) dx + \frac{1}{2\gamma^2}\int_\Omega (u-g)^2_- \ dx\)

as $\gamma\rightarrow 0$. Here, it is important to note that $\gamma$ has units of length, which we can set as some fraction of the finite element spacing. Then, the errors in the finite element approximation are comparable to the distance of the approximate solution to the constraint set.

This is similar to the penalty method for optimization problems with equality constraints. The practical consideration for using the Moreau envelope is that the solution $u$ only satisfies the constraints approximately. For some problems, this will have to be augmented such that the solution stays strictly feasible (ie log-barrier methods).

Newton’s Method on the smoothed cost-functional

Now make the the update function and linesearch/newton search functions

#newton search stuff

from scipy.optimize import minimize_scalar

def update_search_direction(J, u, v):

F = derivative(J, u)

H = derivative(F, u)

bc = firedrake.DirichletBC(u.function_space(), 0, 'on_boundary')

params = {'ksp_type': 'cg', 'pc_type': 'icc'}

firedrake.solve(H == -F, v, bc, solver_parameters=params)

def line_search(J, u, v):

def J_line(step):

t = firedrake.Constant(step)

J_t = replace(J, {u: u + t * v})

return assemble(J_t)

result = minimize_scalar(J_line)

assert result.success

return result.x

def newton_search(J, u, tolerance=1e-10, max_num_steps=30):

v = firedrake.Function(u.function_space())

F = derivative(J, u)

for step in range(max_num_steps):

update_search_direction(J, u, v)

Δ = assemble(action(F, v))

if abs(Δ) < tolerance * assemble(J):

return

t = Constant(line_search(J, u, v))

u.assign(u + t * v)

def continuation_search(g, γ0, num_steps, contraction=0.5):

u = g.copy(deepcopy=True)

γ = Constant(γ0)

for step in range(num_steps):

J = Action(u) + Regularization(u,γ)

newton_search(J, u)

γ.assign(contraction * γ)

return u

Initialize the values we use in the minimization

#setup and run the newton search

u_n = firedrake.Function(Q)

γ = Constant(.1)

J = Action(u_n) + Regularization(u_n,γ)

newton_search(J, u_n)

Solution

Since we ran the Newton search, see how we did and then print out how close we actually were to the obstacle.

firedrake.plot(u_n,cmap='magma', plot3d=True)

<IPython.core.display.Javascript object>

<matplotlib.axes._subplots.Axes3DSubplot at 0x7f79eca83240>

How well does it fit the obstacle?

δn = firedrake.interpolate(max_value(g - u_n, 0), Q)

print('Obstacle error: ', firedrake.assemble(δn * dx) / firedrake.assemble(g * dx))

firedrake.plot(δn,cmap='magma', plot3d=True)

Obstacle error: 0.23124433841742503

<IPython.core.display.Javascript object>

<matplotlib.axes._subplots.Axes3DSubplot at 0x7f79dfb18c18>

Choosing $\gamma$

So it looks like we didn’t do that well in actually matching the boundary conditions, and that is because the $\gamma$ parameter was chosen naively and therefore incorrectly. We can do a pretty simple linesearch to get a solid value of $\gamma$ that matches the actual obstacle problem.

import numpy as np

num_steps = int(np.log2(nx)) + 1

u_nc = continuation_search(g, 1., num_steps)

Solution with continuation $\gamma$

firedrake.plot(u_nc,cmap='magma', plot3d=True)

<IPython.core.display.Javascript object>

<matplotlib.axes._subplots.Axes3DSubplot at 0x7f79ee26fa20>

How well does it fit the obstacle?

δ_nc = firedrake.interpolate(max_value(g - u_nc, 0), Q)

print('Obstacle error: ', firedrake.assemble(δ_nc * dx) / firedrake.assemble(g * dx))

firedrake.plot(δ_nc,cmap='magma', plot3d=True)

Obstacle error: 0.007477029545058705

<IPython.core.display.Javascript object>

<matplotlib.axes._subplots.Axes3DSubplot at 0x7f79eea13828>

Here, we want to test the effect of changing the grid and actually fitting over the obstacle.

# coarse_mesh = firedrake.UnitSquareMesh(nx, ny, quadrilateral=True)

# num_levels = 4

# mesh_hierarchy = firedrake.MeshHierarchy(coarse_mesh, num_levels)

# for level, mesh in enumerate(mesh_hierarchy):

# Q = firedrake.FunctionSpace(mesh, family='CG', degree=1)

# g = firedrake.interpolate(make_obstacle(mesh), Q)

# num_continuation_steps = int(np.log(nx)) + level + 1

# u_nmesh = continuation_search(g, 1, num_continuation_steps)

# print(assemble(max_value(g - u_nmesh, 0) * dx))

First Order Methods

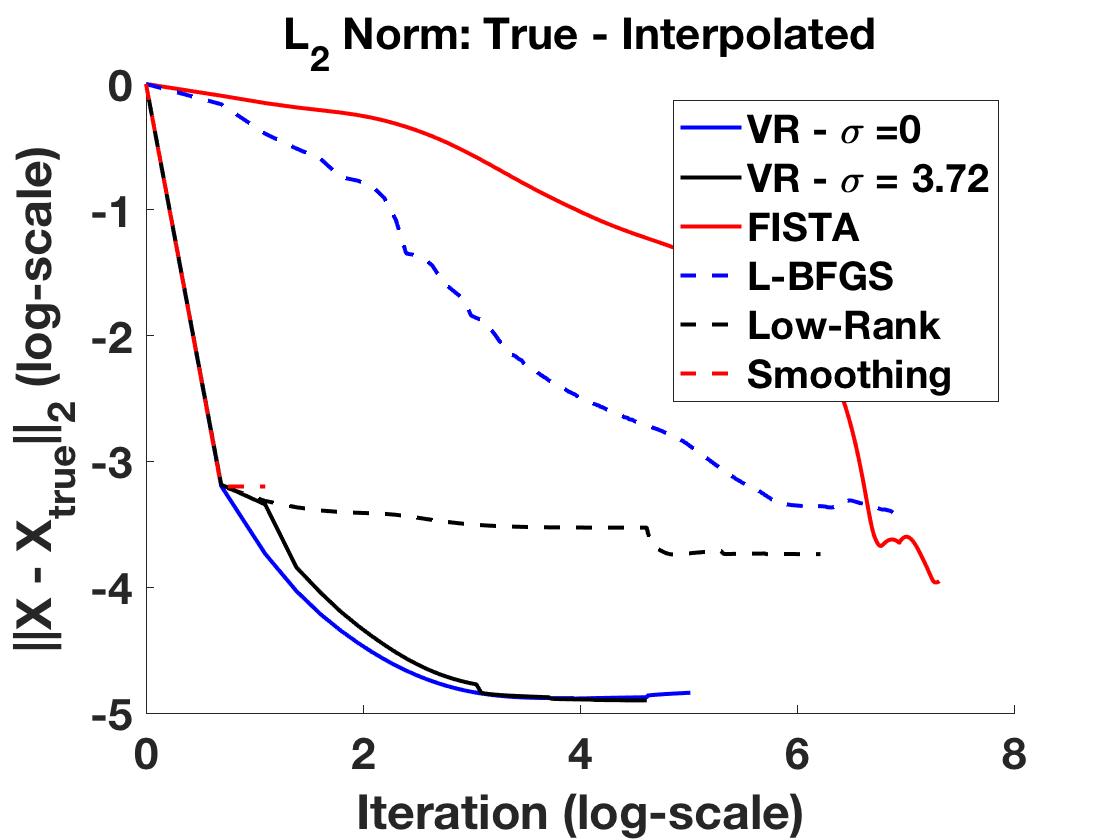

So we can probably do a little bit better in borrowing some contemporary techniques in optimization - namely, proximal gradient descent and FISTA.

The difference between what we’ve done above and what we are going to do is that instead of regularizing the nonsmooth part, we are going to descend with the smooth part and use the proximal information of the nonsmooth part. For a differentiable function $f$, recall that the gradient update $x^+ = x - t\cdot \nabla f(x)$ is derived using the quadratic approximation (replace $\nabla^2 f(x)$ by $\frac1t I$)

\(\begin{align*}

x^+ &= \arg\min_z \ f(x) + \nabla f(x)^T(z-x) + \frac{1}{2\gamma}\|z - x\|^2_2 \\

&= \arg\min_z \ \tilde{f}_\gamma(z).

\end{align*}\)

However, our function is decomposable, and we can use that to our advantage. From above, recall that our functional is

\(F(u) = \mathcal{J}(u) + \mathcal{I}(u)\)

where $\mathcal{J}(u)$ is smooth and $\mathcal{I}(u)$ is nonsmooth. Let’s use the quadratic approximation for the differentiable function $\mathcal{J}(u)$ where $\nabla^2\mathcal{J}(u)\approx \frac1\gamma I$:

\(\begin{align*}

u^+&=\arg\min_z \ \tilde{\mathcal{J}}_t(z) + \mathcal{I}(z) \\

&= \arg\min_z\mathcal{J}(u) + \nabla\mathcal{J}^T(z-u) + \frac{1}{2\gamma}\|z - u\|_2^2 + \mathcal{I}(z)\\

&= \arg\min_z\underbrace{\mathcal{J}(u) - \gamma\|\mathcal{J}(u)\|^2}_{\text{adds nothing}} ... \\

&... + \underbrace{\nabla\mathcal{J}^T(z-u) + \frac{1}{2\gamma}\|z - u\|_2^2 + \gamma\|\mathcal{J}(u)\|^2}_{\text{complete the square}} + \mathcal{I}(z)\\

& = \arg\min_z \ \frac{1}{2\gamma}\|z - (x - \gamma\nabla\mathcal{J}(u))\|^2_2 + \mathcal{I}(z).

\end{align*}\)

Intuitively, you can think of staying close to the gradient update for $\mathcal{J}(u)$ and making the value of $\mathcal{I}(u)$ small. Unfortunately, this is a first-order algorithm, so you will descend with the computational speed of computing a gradient but with the accuracy only up to that gradient. Typically, you choose $\gamma$ to be $\gamma<1/L$ for $L$ the Lipschitz constant of $\nabla\mathcal{J}(u)$.

Proximal Hessian algorithms exist but come with their own set of problems, so we will explore those later. However, various speedups exist for proximal gradient descent; Fast Iterative Shrinkage Thresholding Algorithm (FISTA) is also written up below. There is also a tonne of monotone operator theory and splitting algorithms to explore if we decide to do so later as well.

#Try the proximal gradient stuff

def update_search_direction(A, u):

Q = u.function_space()

φ, ψ = firedrake.TrialFunction(Q), firedrake.TestFunction(Q)

M = φ*ψ*dx

A = A(u)

dA = derivative(A, u)

φ=firedrake.Function(Q)

firedrake.solve(M == dA, φ, firedrake.DirichletBC(Q,0,'on_boundary')) #added boundary conditions

return φ

def proxgrad(γ, Fcn, u, proxG, max_iter = 1000, ε=1e-8):

gradFcn = firedrake.Function(u.function_space())

u_ = u.copy(deepcopy=True)

ν = γ

k = 1

err = 100

f = Fcn(u)

gradFcn = update_search_direction(Fcn, u)

feval = 1

his=[assemble(f)]

while k<max_iter and err>ε:

u = proxG(u_-ν*gradFcn, g)

f = Fcn(u)

gradFcn = update_search_direction(Fcn, u)

feval+=1

err = norm(u-u_)

u_=u.copy(deepcopy=True)

k+=1

his.append(assemble(f))

if k%100==0:

print(k, assemble(f), err)

return u, his, feval

def proxgrad_lnsrch(γ, Fcn, u, proxG, max_iter = 1000, ε=1e-5):

gradFcn = firedrake.Function(u.function_space())

u_ = u.copy(deepcopy=True)

Q = u.function_space()

ν = γ

k = 1

#do first iterate

f = assemble(Fcn(u))

gradFcn = update_search_direction(Fcn, u)

u = proxG(u_-ν*gradFcn, g)

G = u-u_

err = norm(G)

feval = 1

his=[f]

while k<max_iter and err>ε:

while f>assemble(Fcn(u_) - ν*inner(gradFcn, G/ν)*dx) + .5*norm(G)**2:

ν.assign(.5*ν)

u = proxG(u_-ν*gradFcn, g)

f = assemble(Fcn(u))

G = u-u_

feval+=1

u = proxG(u_-ν*gradFcn, g)

err = norm(G)

f = assemble(Fcn(u))

gradFcn = update_search_direction(Fcn, u)

u_=u.copy(deepcopy=True)

k+=1

his.append(f)

if k%50==0:

print(k, f, err, assemble(interpolate(ν,Q)*dx))

return u, his, feval

#setup and run the proximal gradient

u = firedrake.Function(Q)

γ = Constant(1.0)

u_pgl, his_pgl, fevalnum_pgl = proxgrad_lnsrch(γ, Action, u, proxReg)

50 0.21688921807790418 0.000522681330502645 3.8146972656248857e-06

100 0.20204651403135584 0.000522681330502645 3.8146972656248857e-06

150 0.19332342919475282 0.000522681330502645 3.8146972656248857e-06

200 0.1868550509028915 0.000522681330502645 3.8146972656248857e-06

250 0.18159764800068912 0.000522681330502645 3.8146972656248857e-06

300 0.17713408992522042 0.000522681330502645 3.8146972656248857e-06

350 0.17324964523754144 0.000522681330502645 3.8146972656248857e-06

400 0.16980905293142673 0.000522681330502645 3.8146972656248857e-06

450 0.16672405157371264 0.000522681330502645 3.8146972656248857e-06

500 0.1639325667885705 0.000522681330502645 3.8146972656248857e-06

550 0.1613871147410194 0.000522681330502645 3.8146972656248857e-06

600 0.15904759861100323 0.000522681330502645 3.8146972656248857e-06

650 0.15688300621817441 0.000522681330502645 3.8146972656248857e-06

700 0.15487122590573335 0.000522681330502645 3.8146972656248857e-06

750 0.1529942440133643 0.000522681330502645 3.8146972656248857e-06

800 0.1512366270335444 0.000522681330502645 3.8146972656248857e-06

850 0.14958579063031174 0.000522681330502645 3.8146972656248857e-06

900 0.14803079771284683 0.000522681330502645 3.8146972656248857e-06

950 0.14656220349864088 0.000522681330502645 3.8146972656248857e-06

1000 0.14517224347829444 0.000522681330502645 3.8146972656248857e-06

Solution

firedrake.plot(u_pgl,cmap='magma', plot3d=True)

<IPython.core.display.Javascript object>

<matplotlib.axes._subplots.Axes3DSubplot at 0x7f79da7e37f0>

PG - How well does it fit the obstacle?

δpgl = firedrake.interpolate(max_value(g - u_pgl, 0), Q)

firedrake.plot(δpgl,cmap='magma', plot3d=True)

print('Obstacle error: ', firedrake.assemble(δpgl * dx) / firedrake.assemble(g * dx))

<IPython.core.display.Javascript object>

FISTA

def FISTA(γ, Fcn, u, proxG, ε = 1e-5, max_iter = 300):

u_ = u.copy(deepcopy=True)

v = u.copy(deepcopy=True)

ν = γ

t = 1.0

tk = t

k = 1

err = 100

f = assemble(Fcn(u))

gradFcn = update_search_direction(Fcn, v)

feval = 1

his = [f]

while k<max_iter and err>ε:

u = proxG(v - ν*gradFcn, g)

tk = t

t = 0.5*(1.0 + sqrt(1.0+4.0*tk**2))

v = interpolate(u + ((tk-1.0)/t)*(u - u_), u.function_space())

f = Fcn(u)

gradFcn = update_search_direction(Fcn, v)

feval+=1

err = norm(u-u_)

u_=u.copy(deepcopy=True)

k+=1

his.append(assemble(f))

if k%10==0:

print(k, assemble(f), err)

return u, his, feval

def FISTA_lnsrch(γ, Fcn, u, proxG, ε = 1e-5, max_iter = 300):

u_ = u.copy(deepcopy=True)

v = u.copy(deepcopy=True)

ν = γ

t = 1.0

tk = t

k = 1

err = 100

feval = 1

his = []

while k<max_iter and err>ε:

gradFcn = update_search_direction(Fcn, v)

u = proxG(v-ν*gradFcn, g)

G = u - v

while assemble(Fcn(u)) > assemble(Fcn(v) + inner(gradFcn, G)*dx + interpolate(.5/ν*norm(G)**2, Q)*dx):

ν.assign(.5*ν)

u = proxG(v-ν*gradFcn, g)

G = u - v

tk = t

t = 0.5*(1.0 + sqrt(1.0+4.0*tk**2))

v.assign(u + ((tk-1.0)/t)*(u - u_))

feval+=1

err = norm(u-u_)

u_=u.copy(deepcopy=True)

his.append(assemble(Fcn(u)))

if k%50==0:

print(k, assemble(Fcn(u)), err,assemble(interpolate(ν,Q)*dx))

k+=1

return u, his, feval

#setup and run the FISTA

u = firedrake.Function(Q)

γ = Constant(1.0)

J = Action(u) + Regularization(u,γ)

contraction = 1.0

u_fl, his_fl, fevalnumfl = FISTA_lnsrch(γ, Action, u, proxReg)

50 0.15558849726694624 0.00041051190775377787 7.629394531249771e-06

100 0.11785076594470839 0.0003632463078800196 7.629394531249771e-06

150 0.10725491732514654 0.00023699902343820458 7.629394531249771e-06

200 0.10771406462520358 6.214585644621353e-05 7.629394531249771e-06

250 0.10750606345065795 6.560751144910141e-05 7.629394531249771e-06

FISTA - Solution

firedrake.plot(u_fl,cmap='magma', plot3d=True)

<IPython.core.display.Javascript object>

<matplotlib.axes._subplots.Axes3DSubplot at 0x7f79cf977f60>

FISTA - How well did we match the obstacle?

δfl = firedrake.interpolate(max_value(g - u_fl, 0), Q)

firedrake.plot(δfl,cmap='magma', plot3d=True)

print(firedrake.assemble(δfl * dx) / firedrake.assemble(g * dx))

<IPython.core.display.Javascript object>

print('MY-reg vs FISTA', norm(u_nc - u_pgl))

print('MY-reg vs Prox', norm(u_nc - u_fl))

print('Prox vs FISTA', norm(u_fl - u_pgl))

print('\n')

print('J(u):')

print('FISTA - ', his_fl[-1])

print('Prox - ', his_pgl[-1])

print('MR-reg - ', assemble(Action(u_nc)))

print('\n')

print('I(u):')

print('FISTA - ', norm(g-u_fl))

print('Prox - ', norm(g-u_pgl))

print('MR-reg - ', norm(g-u_nc))

MY-reg vs FISTA 0.0313388675986856

MY-reg vs Prox 0.001331370241705098

Prox vs FISTA 0.03221135098734603

J(u):

FISTA - 0.10694045946880043

Prox - 0.14517224347829444

MR-reg - 0.10446929907255309

I(u):

FISTA - 0.05010225767073729

Prox - 0.021826279356792835

MR-reg - 0.04920471759233756

#setup and run the proximal gradient

u = firedrake.Function(Q)

γ = Constant(1e-5)

J = Action(u) + Regularization(u,γ)

contraction = 1.0

u_pg, his_pg, fevalnum = proxgrad(γ, Action, u, proxReg)

100 0.19509158392272552 6.178258675243559e-05

200 0.170133804097906 4.175868255428276e-05

300 0.1565618193376643 3.307847151687289e-05

400 0.1474239552403334 2.7941254430279287e-05

500 0.14065545679605587 2.4426340886692934e-05

600 0.13537012383058378 2.1801840670765223e-05

700 0.13109783972047526 1.9730107777740474e-05

800 0.12756954552549618 1.7985121300190773e-05

900 0.12462134842720066 1.6477916718467577e-05

1000 0.12213617255583142 1.5152757297654973e-05

Solution

firedrake.plot(u_pg,cmap='magma', plot3d=True)

<IPython.core.display.Javascript object>

<matplotlib.axes._subplots.Axes3DSubplot at 0x7f79cc483a20>

How well do we match the obstacle?

δpg = firedrake.interpolate(max_value(g - u_pg, 0), Q)

firedrake.plot(δpg,cmap='magma', plot3d=True)

print(firedrake.assemble(δpg * dx) / firedrake.assemble(g * dx))

<IPython.core.display.Javascript object>

#setup and run the FISTA

u_f = firedrake.Function(Q)

γ = Constant(1e-6)

J = Action(u_f) + Regularization(u_f,γ)

contraction = 1.0

u_f, his_f, fevalnum = FISTA(γ, Action, u_f, proxReg)

10 0.35333448594582123 0.00018517445104196335

20 0.2998912850738678 0.00017183243326126526

30 0.26901481807728617 0.0001665441993947033

40 0.247064018382059 0.00016494458383237734

50 0.23005770615964077 0.00016375496509659536

60 0.21632182133080252 0.00016206667623639868

70 0.20495194284216262 0.00016033824698708882

80 0.19528364374416185 0.00015883373240513406

90 0.1869013328048592 0.00015738905797757364

100 0.17954975256410755 0.00015585407719869473

110 0.17304611799737823 0.0001543156984593124

120 0.16723280702936189 0.00015279870855605004

130 0.16199637714242543 0.000151348584449328

140 0.15724421556219567 0.00014994781583127796

150 0.15290635466903102 0.0001485703720695318

160 0.14892985541254217 0.00014721929893667354

170 0.14526756300429416 0.0001458867291838844

180 0.14188318620613574 0.0001445749667316996

190 0.13874590808062814 0.000143309624110854

200 0.13582353061510194 0.0001420939768468919

210 0.13309209364011107 0.00014091528643703262

220 0.13053347405862725 0.00013976140783658999

230 0.12813188908462025 0.00013863281697732373

240 0.1258728921271955 0.00013752468027841984

250 0.12374761557950595 0.00013641281028126847

260 0.12175461696335879 0.0001352404036086386

270 0.11989750615173697 0.0001339321589572722

280 0.11818028704117944 0.00013241544357216894

290 0.11660695587249105 0.00013063540410141223

300 0.11517932719982558 0.00012856411428243593

Solution

firedrake.plot(u_f,cmap='magma', plot3d=True)

<IPython.core.display.Javascript object>

<matplotlib.axes._subplots.Axes3DSubplot at 0x7f79c47487b8>

How well did we match the obstacle?

δf = firedrake.interpolate(max_value(g - u_f, 0), Q)

firedrake.plot(δf,cmap='magma', plot3d=True)

print(firedrake.assemble(δf * dx) / firedrake.assemble(g * dx))

<IPython.core.display.Javascript object>

]]>